
There are more than 100 different teams from all the various departments in the company. Tenants in SuperCollider are grouped into self-organized teams.

SuperCollider, which I referred to earlier, has thousands of active users. They also need a working knowledge of the underlying systems. To restate: data engineers cannot simply write SQL. And of course, you cannot simply ask Tableau admins to increase the maximum refresh duration time, because this request would affect the performance of the refreshes of other Tableau tenants, as well. Soon, the daily report refreshes start failing because the data that feeds the report becomes so big that a) the SQL query that produces it started running slowly, and b) there’s not enough network bandwidth to transfer the result within the Tableau-defined limits for refresh duration. However, over time, the source data grows exponentially (as expected).

Initially, the report works and is responsive. However, the data source - a Presto - is deployed in another geo location. Let’s look at a simple example: imagine you implement a Tableau report that is refreshed daily. The bottom line is that one cannot just write code that does not account for the underlying technologies, systems, and processes. All three roles need to work directly with the underlying data infrastructure. The common denominator is the data engineering work - data integration and transformation. Data scientists work on model training, so they spend a lot of time in data preparation. Data engineers use SQL and scripts for transformation and data integration. Data analysts are supposed to drag and drop in an interactive business-intelligence (BI) tool and occasionally write SQL queries. So why is data engineering the focus of this article? Well, let’s look at what is common across roles. So, we decided to make data engineering more efficient. Given that we have hundreds of full-time data engineers, analysts, and data scientists, it made sense to optimize their day-to-day work and help VMware navigate faster in this data-driven world.

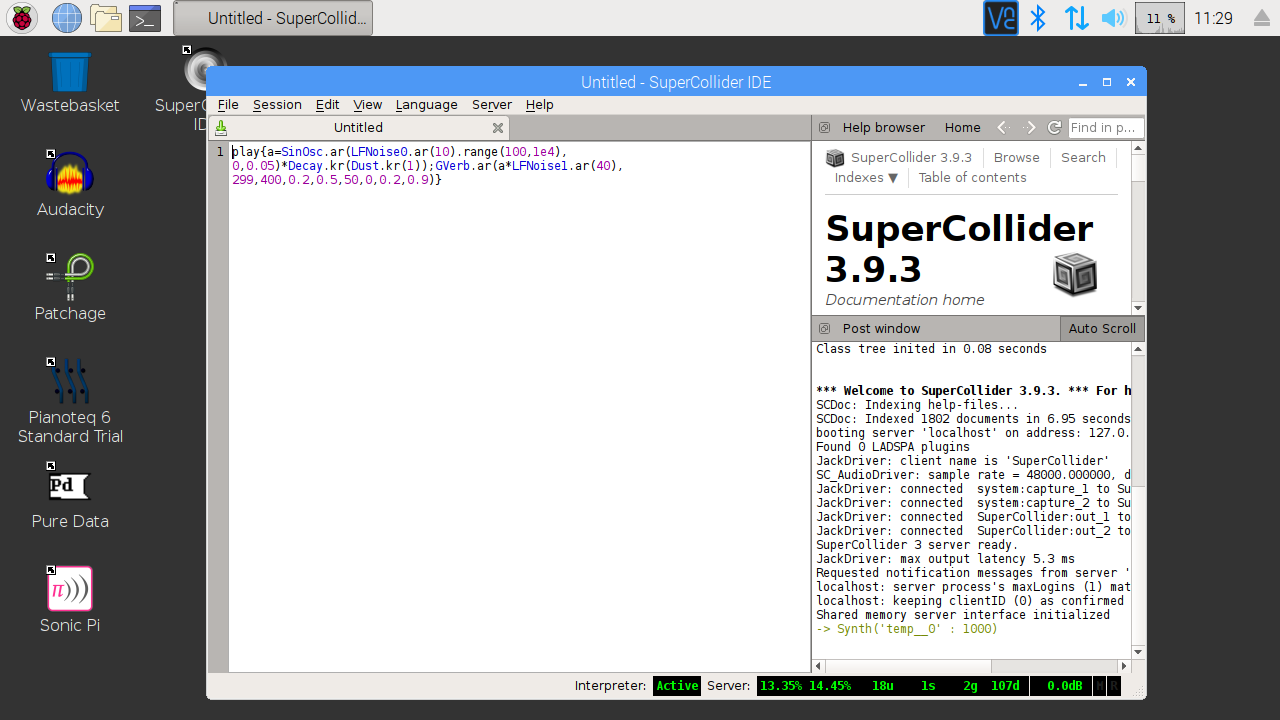

These new types of problems made our work far more inefficient than it was with good old ACID SQL transactional RDBMS offered by traditional data warehouses. parquet files on the storage while Presto read them, which could result in query errors or inconsistent results returned by the SQL query…and much more. Or that when querying data from Presto, somebody else might be changing the. For example, with disparate sources and heterogenous infrastructure, data engineers and data analysts had to learn that a single query against Amazon Redshift could indefinitely block all other queries and pipelines. These days, they also need to understand things like HDFS, S3, AWS, and Apache Parquet. A decade ago, a data engineer needed excellent SQL knowledge and some scripting. This meant that to maintain high-quality, consistent datasets, data engineers had to think about enormous amounts of data - but also needed to understand the underlying infrastructure in deep technical detail. To accommodate this massive growth, traditional data-warehousing solutions gave way to data lakes, which were cheaper to scale. Ninety percent of the data that exists in the world today was created within the last three years. This post will explain the genesis of this project, as well as the results we’ve seen using VDK. We found it so useful that we decided to release it as an open-source project. It is a framework that helps data engineers develop, troubleshoot, run, and manage data-processing workloads (data jobs). VDK was initially built as part of a self-service data analytics-platform called SuperCollider. We recently released the Versatile Data Kit (VDK), which we built to boost data engineers’ efficiency.

Data engineers invest time not only in activities that require their core data-engineering skills (such as SQL and Python) but also in tasks that require system knowledge: configuration, monitoring, testing, deployment, and automation. They also need to acquire and maintain a deep technical understanding of the underlying infrastructure. All those non-core activities contribute to the total cost of the end product, whether it is an interactive dashboard or a new dataset. The more time they spend on non-core activities, the more inefficient they become.
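To make the Tableau refresh example above a bit more concrete, here is a rough back-of-the-envelope sketch in Python. It is only an illustration under stated assumptions: the function names, the simple "scan time plus transfer time" model, and every number (data size, growth rate, scan throughput, bandwidth, refresh limit) are hypothetical and do not come from Tableau, Presto, or SuperCollider.

```python
from typing import Optional


def refresh_seconds(source_gb: float, result_fraction: float,
                    scan_gb_per_s: float, bandwidth_mbit_per_s: float) -> float:
    """Rough refresh time: query scan time plus cross-region transfer time.

    Assumes (hypothetically) that the query scans the whole source at a fixed
    throughput and that the result is a fixed fraction of the source size.
    """
    query_s = source_gb / scan_gb_per_s
    # Convert the result size from gigabytes to megabits (1 GB = 8 * 1000 Mbit).
    result_megabits = source_gb * result_fraction * 8 * 1000
    transfer_s = result_megabits / bandwidth_mbit_per_s
    return query_s + transfer_s


def first_failing_day(initial_gb: float, daily_growth: float, limit_s: float,
                      max_days: int = 3650, **model: float) -> Optional[int]:
    """Return the first day on which the estimated refresh exceeds the limit.

    The source grows by `daily_growth` (e.g. 0.05 for 5%) every day, i.e.
    exponentially. Returns None if the limit is never exceeded in `max_days`.
    """
    size_gb = initial_gb
    for day in range(max_days + 1):
        if refresh_seconds(size_gb, **model) > limit_s:
            return day
        size_gb *= 1 + daily_growth
    return None


if __name__ == "__main__":
    # All values below are made-up inputs for illustration only.
    model = dict(result_fraction=0.1, scan_gb_per_s=1.0, bandwidth_mbit_per_s=100.0)
    day = first_failing_day(initial_gb=10.0, daily_growth=0.05, limit_s=1800.0, **model)
    print(f"First refresh over the limit on day: {day}")
```

The point of the sketch is that nothing in the report's SQL changes between the day it works and the day it fails; only the data size does, which is why the author of the report needs to know about the infrastructure underneath it.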
